8 Advanced parallelization - Deep Learning with JAX

By a mysterious writer

Description

Using easy-to-revise parallelism with xmap() · Compiling and automatically partitioning functions with pjit() · Using tensor sharding to achieve parallelization with XLA · Running code in multi-host configurations
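To make the tensor sharding topic above concrete, here is a minimal sketch rather than code from the chapter. It assumes a recent JAX release in which `jax.jit` accepts sharded inputs (the functionality formerly exposed through `pjit()`), and it builds a one-dimensional device mesh from whatever devices are available, so it also runs on a single CPU. The mesh axis name `"data"`, the array shapes, and the `forward` function are illustrative assumptions.

```python
import jax
import jax.numpy as jnp
import numpy as np
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P

# Build a 1D mesh over all available devices (possibly just one CPU).
# The axis name "data" is an arbitrary label chosen for this sketch.
devices = np.array(jax.devices())
mesh = Mesh(devices, axis_names=("data",))
n = len(devices)

# Toy inputs: a batch whose leading dimension is divisible by the device count.
x = jnp.arange(n * 4 * 3, dtype=jnp.float32).reshape(n * 4, 3)
w = jnp.ones((3, 2), dtype=jnp.float32)

# Split the batch axis of x across the "data" mesh axis; replicate w everywhere.
x_sharded = jax.device_put(x, NamedSharding(mesh, P("data", None)))
w_replicated = jax.device_put(w, NamedSharding(mesh, P()))

@jax.jit
def forward(x, w):
    # XLA partitions this computation according to the input shardings.
    return jnp.tanh(x @ w)

y = forward(x_sharded, w_replicated)
print(y.shape)     # one output row per input row
print(y.sharding)  # shows how the result is laid out across devices
```

With more than one device, each device holds only its slice of `x` and computes the matching slice of `y`; with a single device the same code runs unchanged, which makes the sketch easy to try locally before moving to a multi-device setup.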

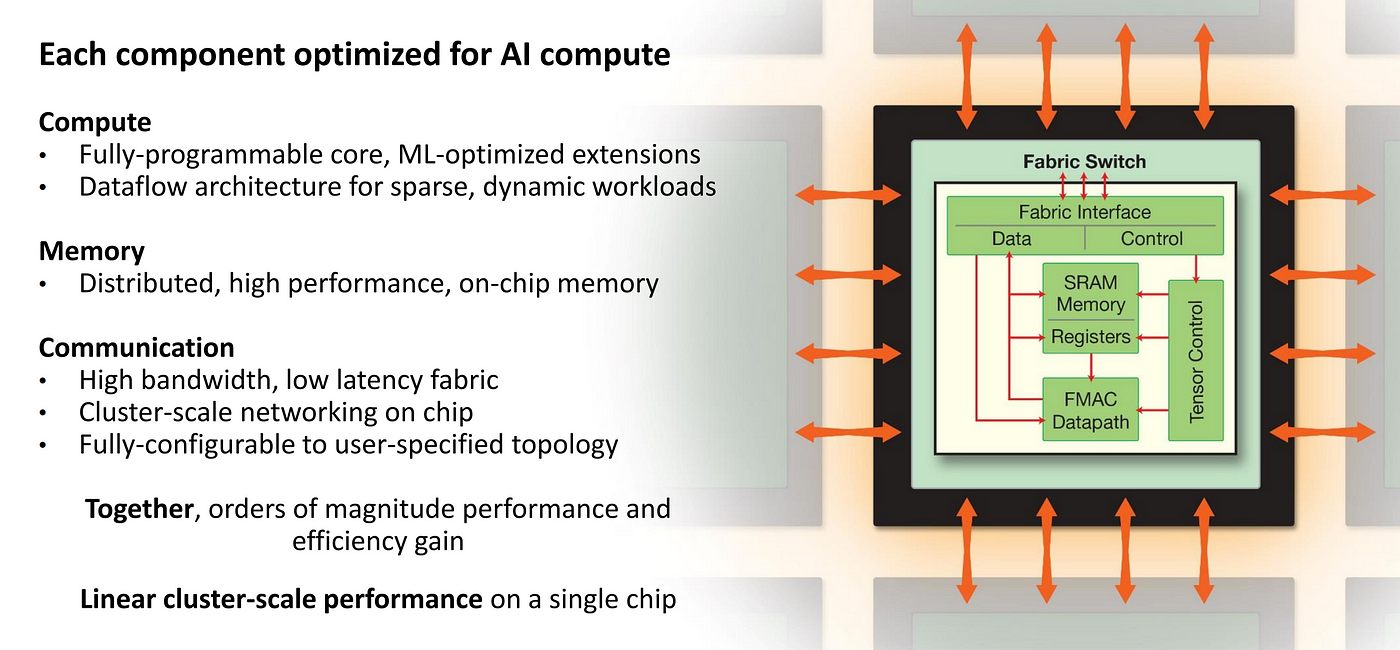

Hardware for Deep Learning. Part 4: ASIC

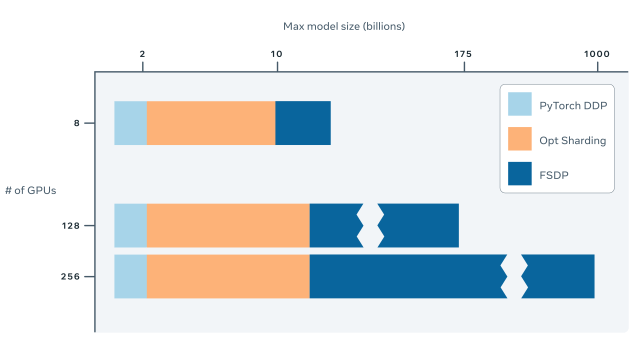

Fully Sharded Data Parallel: faster AI training with fewer GPUs

Tutorial 6 (JAX): Transformers and Multi-Head Attention — UvA DL

Top 11 Machine Learning Software - Learn before you regret

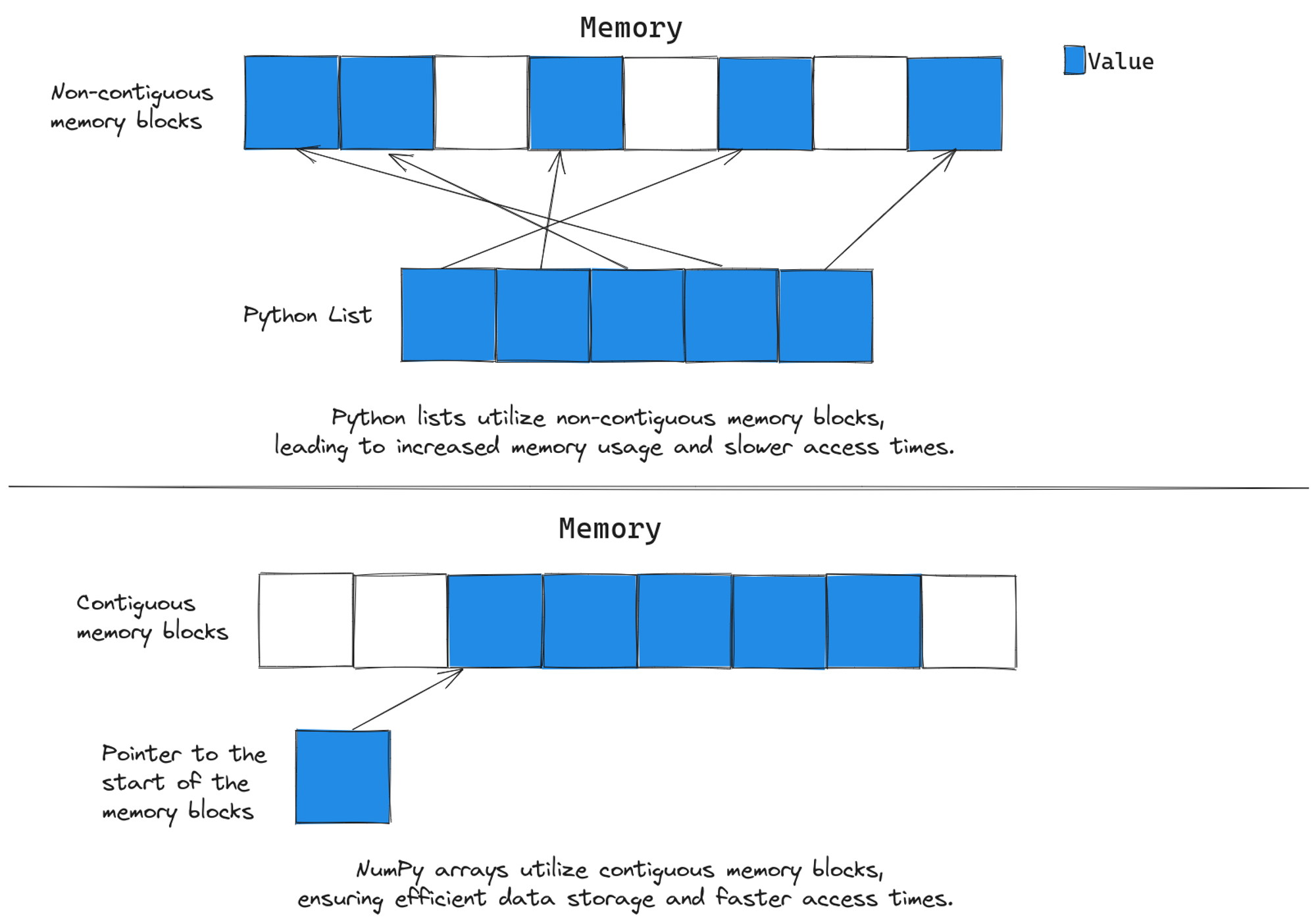

Breaking Up with NumPy: Why JAX is Your New Favorite Tool

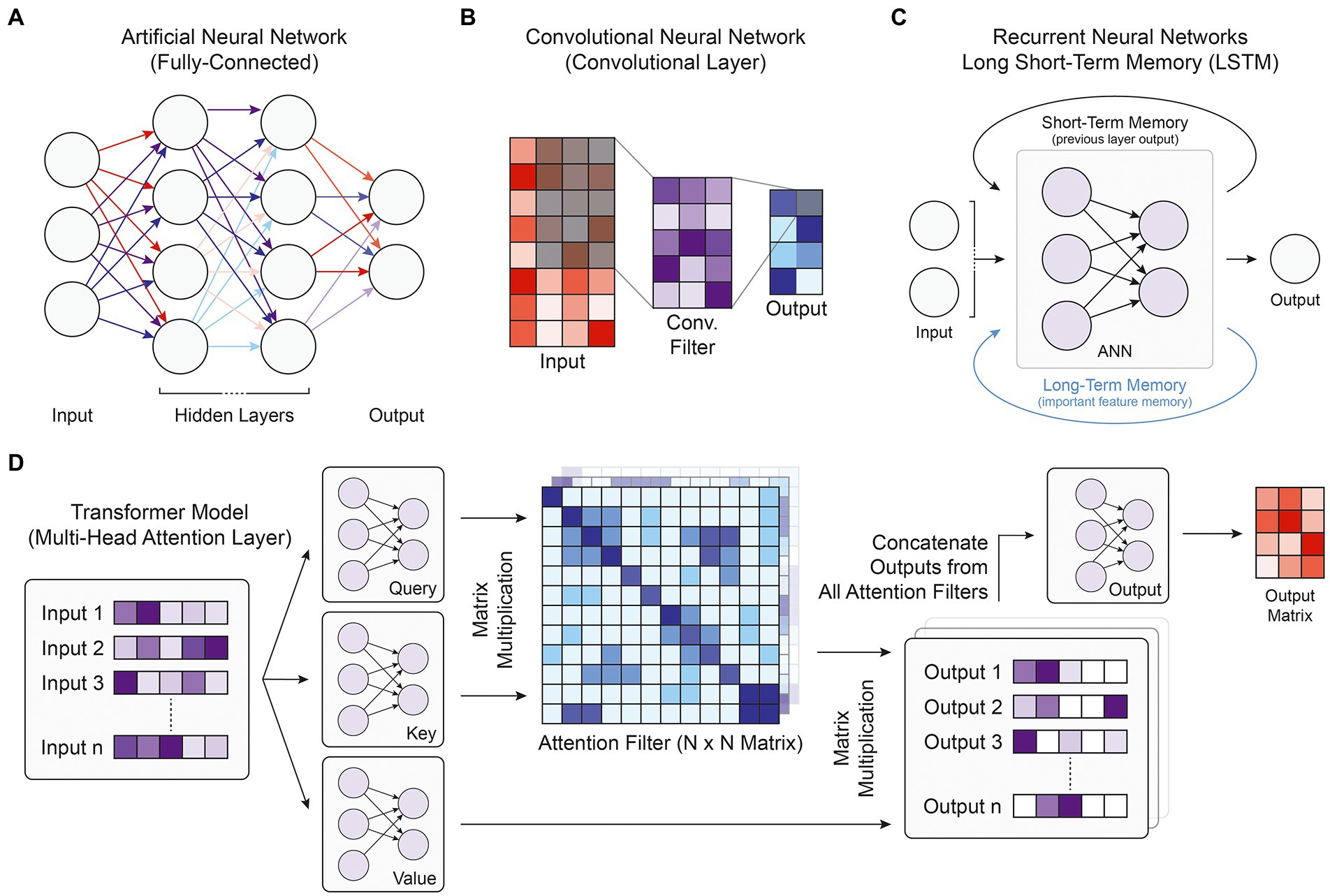

Frontiers Deep learning approaches for noncoding variant

Writing a Training Loop in JAX and Flax

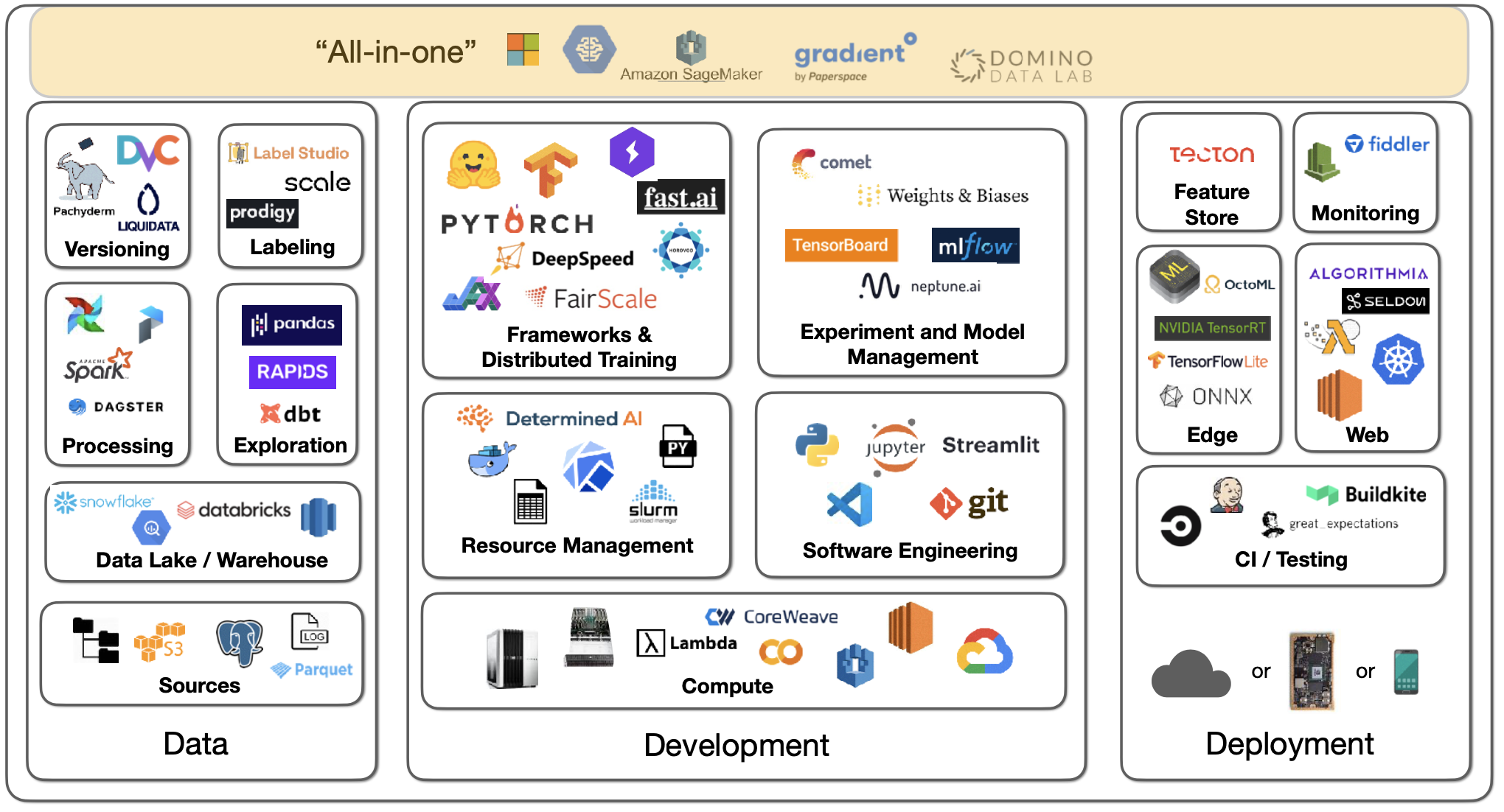

Lecture 2: Development Infrastructure & Tooling - The Full Stack

Efficiently Scale LLM Training Across a Large GPU Cluster with

Using JAX to accelerate our research - Google DeepMind

GitHub - che-shr-cat/JAX-in-Action: Notebooks for the JAX in

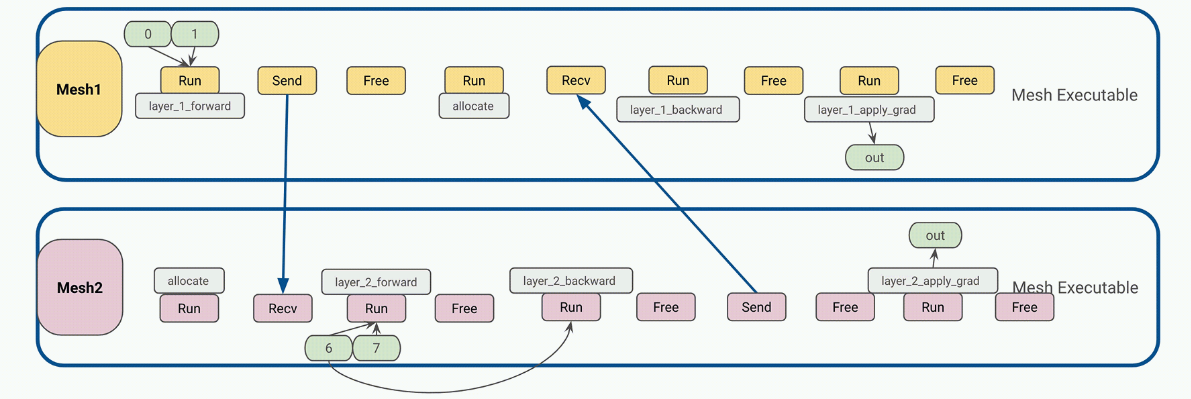

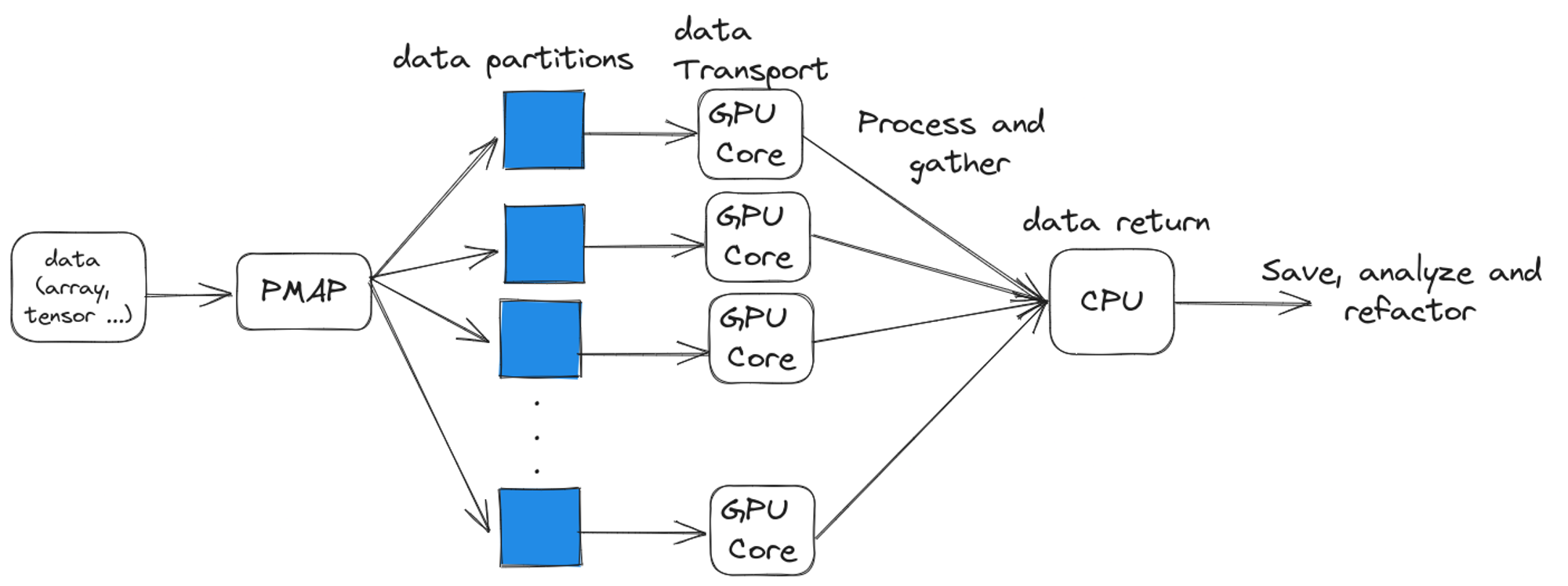

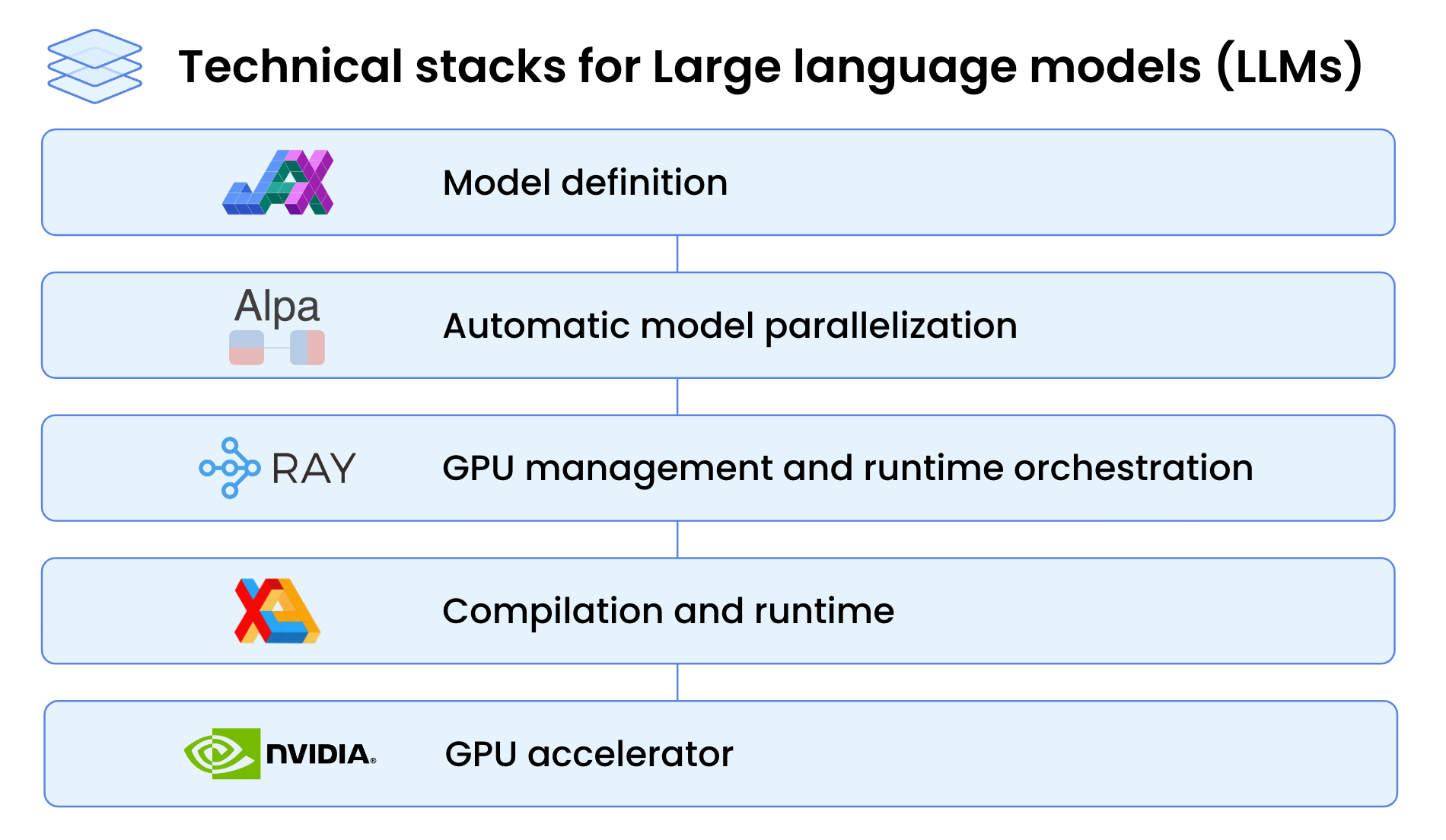

High-Performance LLM Training at 1000 GPU Scale With Alpa & Ray

Compiler Technologies in Deep Learning Co-Design: A Survey
