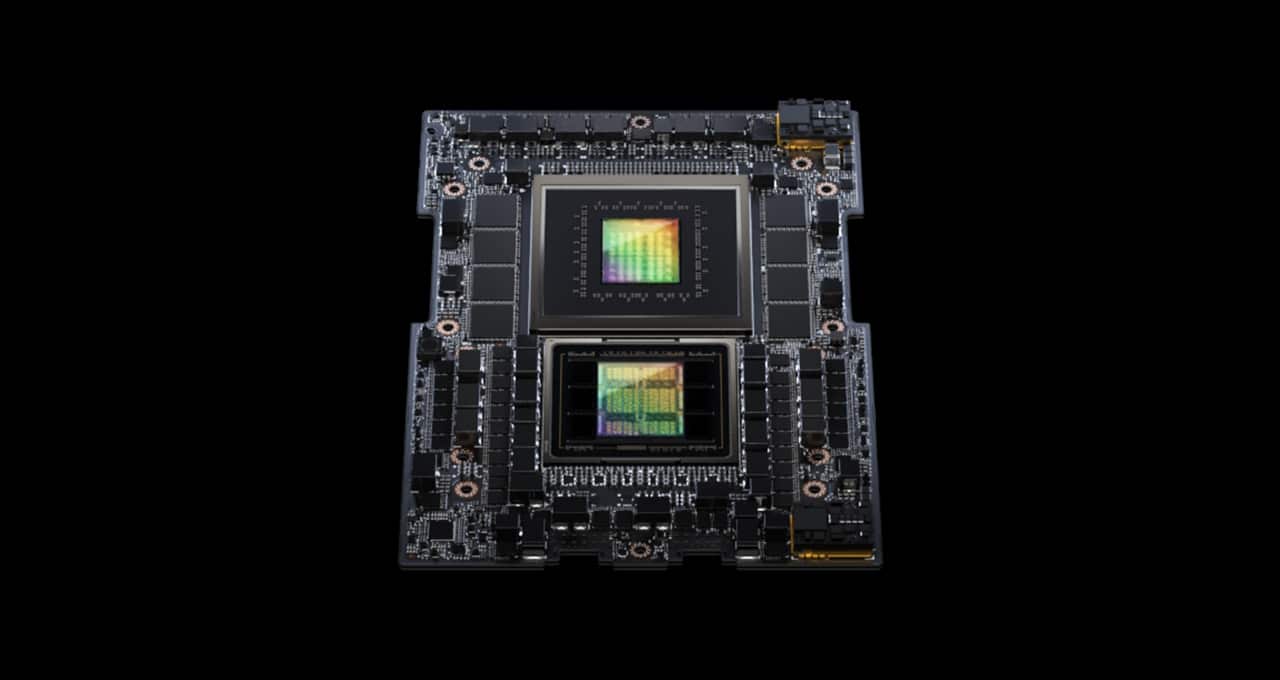

NVIDIA Grace Hopper Superchip Sweeps MLPerf Inference Benchmarks

By a mysterious writer

Description

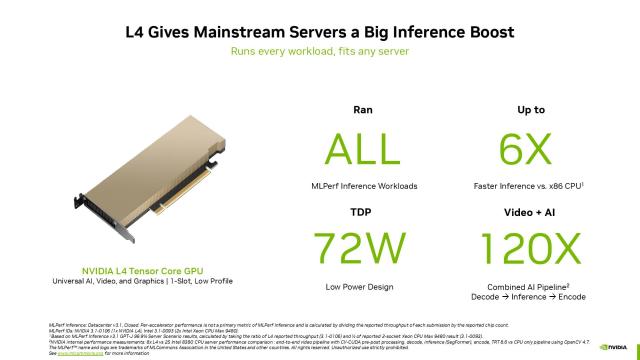

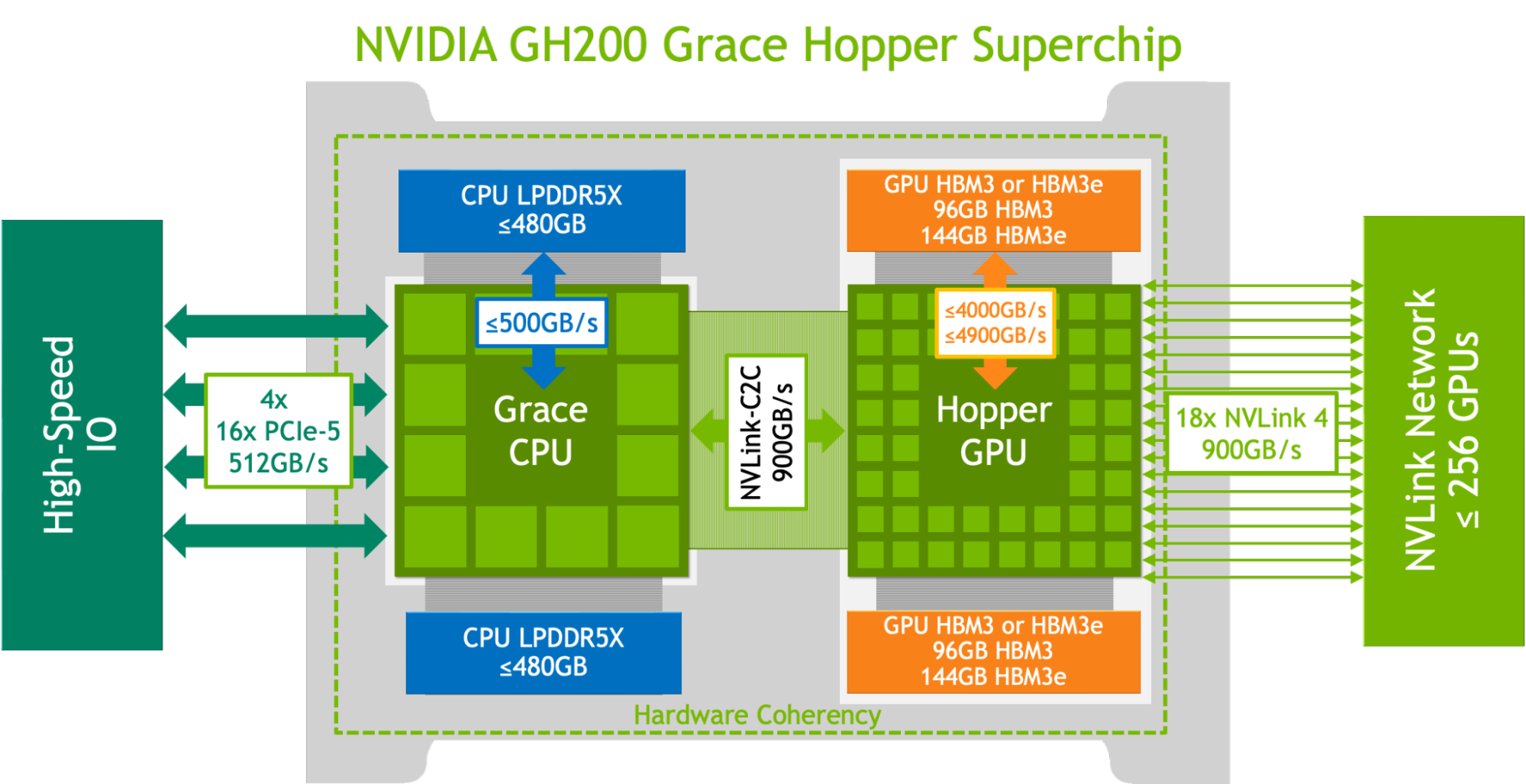

NVIDIA GH200, H100 and L4 GPUs and Jetson Orin modules show exceptional performance running AI in production from the cloud to the network’s edge.

Laurent Duhem on LinkedIn: NVIDIA Unveils Next-Generation GH200 Grace Hopper Superchip Platform for…

NVIDIA Keynote at COMPUTEX 2022: NVIDIA's accelerated computing platform is revolutionizing everything from gaming to data centers to robotics. By NVIDIA

Nvidia Submits First Grace Hopper CPU Superchip Benchmarks to MLPerf

Smarter, Faster Call Center Transcription for Financial Services

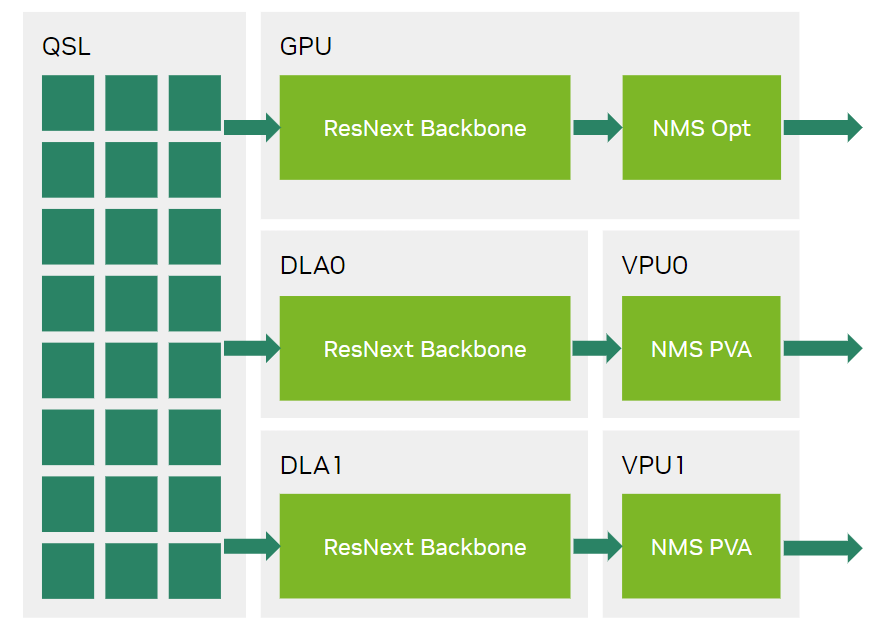

Leading MLPerf Inference v3.1 Results with NVIDIA GH200 Grace Hopper Superchip Debut

Nvidia Shows Off Grace Hopper in MLPerf Inference - EE Times

NVIDIA - With help from NVIDIA Quadro GP100 and P6000

Can Nvidia Maintain Its Position in the AI Chip Arms Race? - moomoo Community

NVIDIA Posts Big AI Numbers In MLPerf Inference v3.1 Benchmarks With Hopper H100, GH200 Superchips & L4 GPUs

Vladimir Troy on LinkedIn: NVIDIA GH200 Grace Hopper Superchip Sweeps MLPerf Inference Benchmarks

Fabio Alves on LinkedIn: NVIDIA Grace Hopper Superchip Sweeps MLPerf Inference Benchmarks

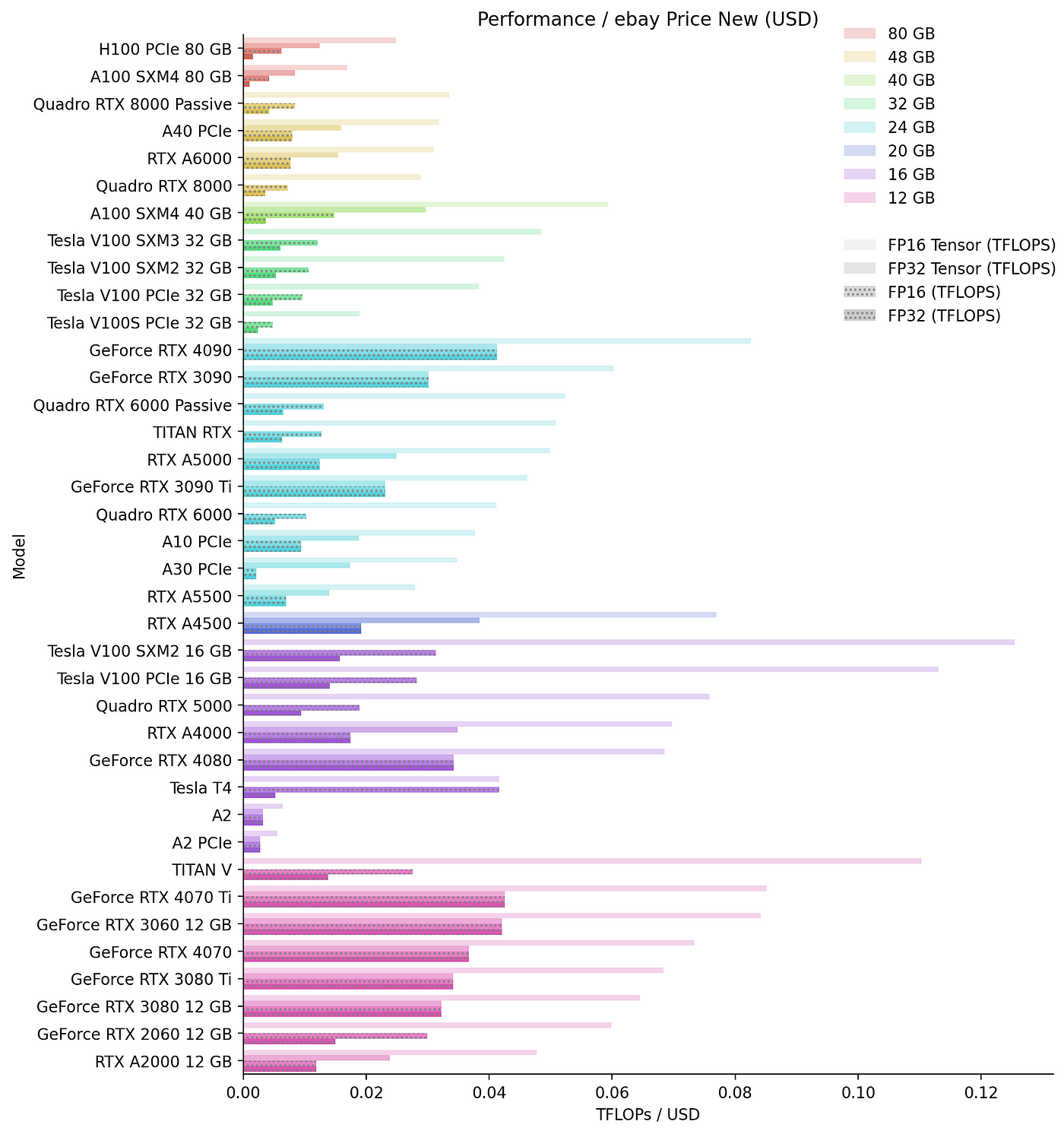

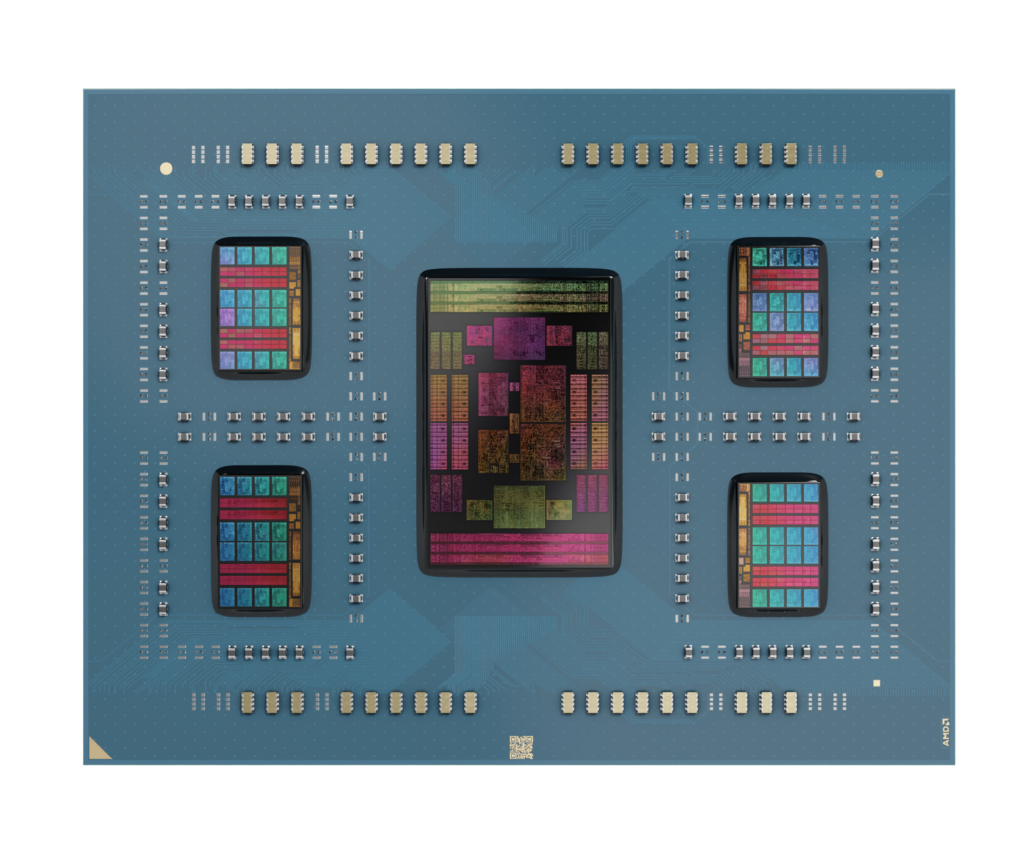

Is the CPU comparison between AMD, Intel, and Nvidia necessary?
