vocab.txt · nvidia/megatron-bert-cased-345m at main

By a mysterious writer

Description

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

PCL-Platform.Intelligence/PanGu-Alpha-GPU: PanGu-Alpha model inference and training on GPU - panguAlpha_pytorch/README_mgt.md at master - PanGu-Alpha-GPU - OpenI - Qizhi AI open source community, providing accessible computing power!

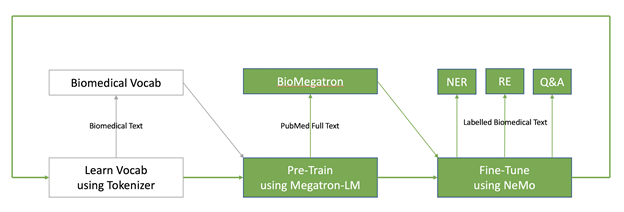

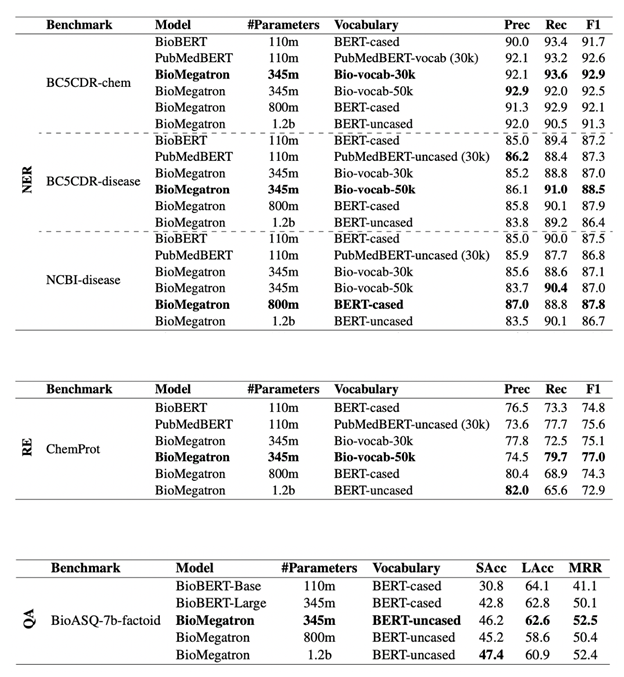

Building State-of-the-Art Biomedical and Clinical NLP Models with BioMegatron

Convert megatron lm ckpt to nemo · Issue #5517 · NVIDIA/NeMo · GitHub

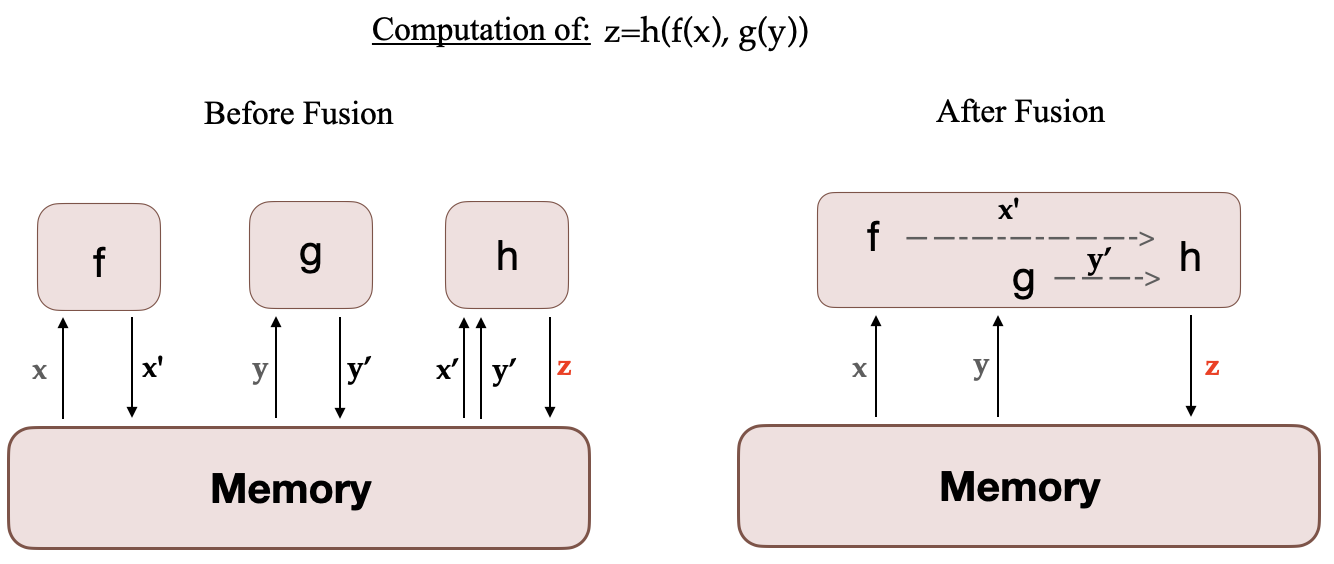

Mastering LLM Techniques: Training

pangu pytorch · 4b6e9e859b - PanGu-Alpha-GPU - OpenI - Qizhi AI open source community, providing accessible computing power!

'bert-base-uncased-vocab.txt' not found in model shortcut name list · Issue #9 · TencentYoutuResearch/PersonReID-NAFS · GitHub

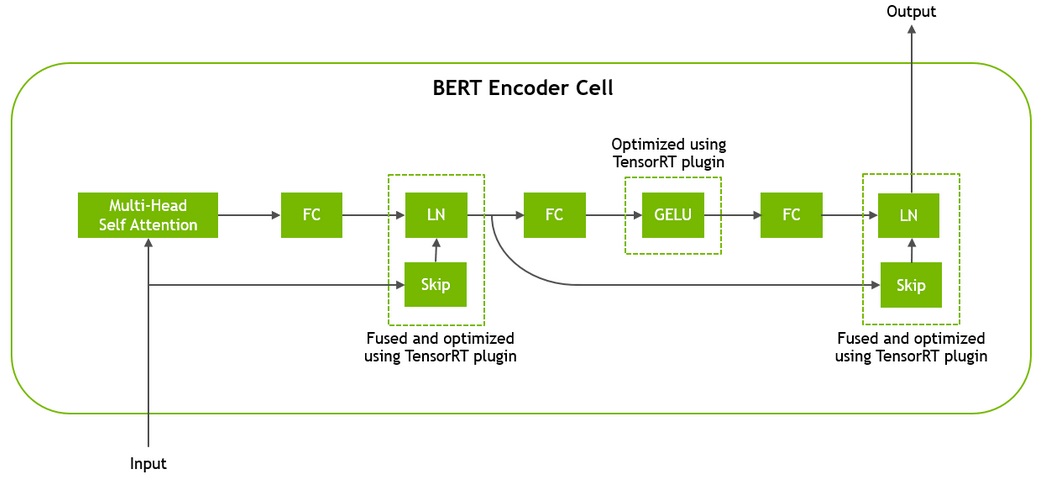

Real-Time Natural Language Understanding with BERT Using TensorRT

GitHub - ProjectD-AI/LLaMA-Megatron-DeepSpeed: Ongoing research training transformer language models at scale, including: BERT & GPT-2

BERT Transformers — How Do They Work?, by James Montantes

How to train a Language Model with Megatron-LM

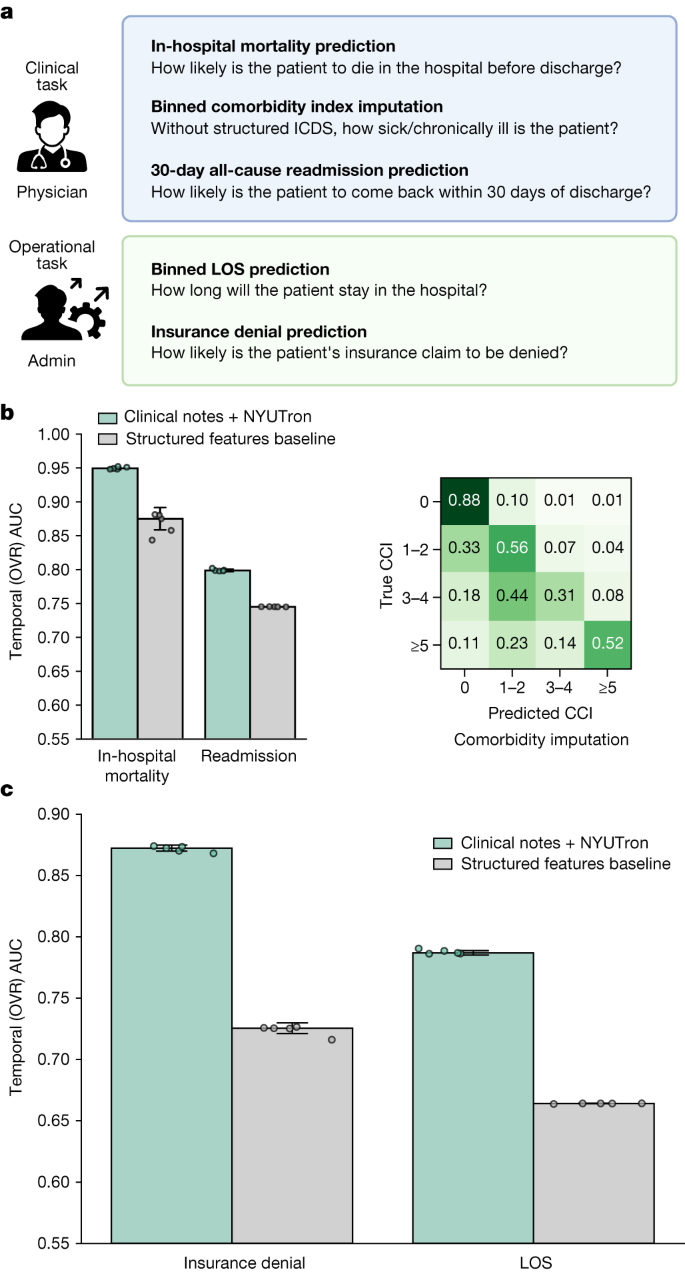

Health system-scale language models are all-purpose prediction engines

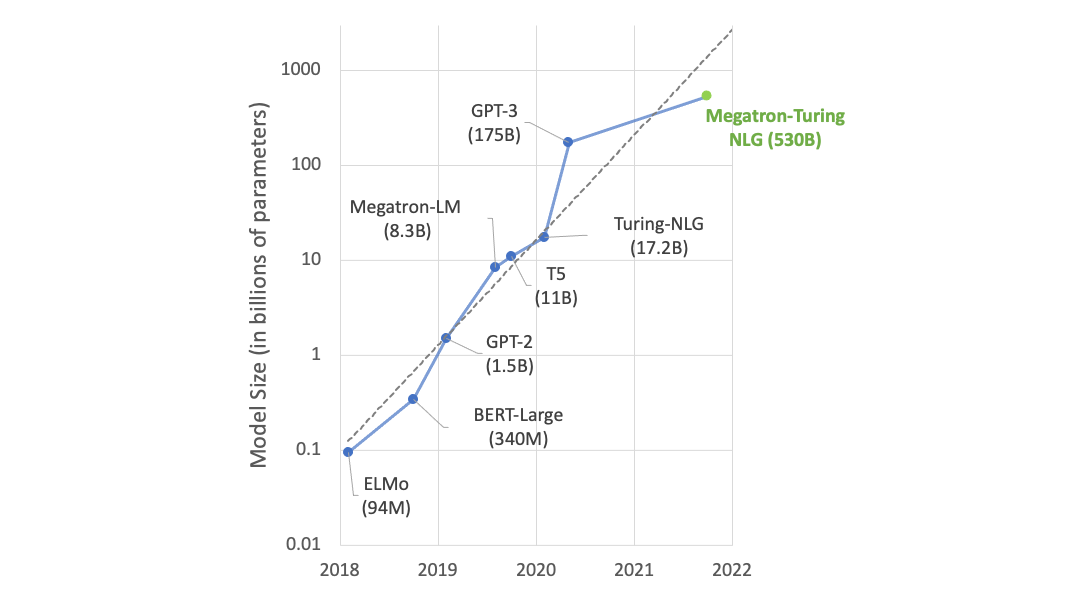

State-of-the-Art Language Modeling Using Megatron on the NVIDIA A100 GPU
